Authors

Elliot Gunn

Product Marketing Manager

Apache Airflow 2 reaches end of life in April 2026. For most teams, the default response is to upgrade to Airflow 3 and move on. Airflow’s own open issue tracker hints at a different outcome: DAGs not deactivating after migration, auth failures on fresh installs, broken task dependencies from changed behavior. The upgrade that looks straightforward on paper has already produced real friction in practice.

That challenge gets amplified for teams with years of accumulated DAGs that have their own workarounds and undocumented assumptions. No official migration guide will account for any of that.

That’s the situation EOL will drop you into. I’ll cover what it actually means, then explain why the upgrade-by-default is worth pausing on before you commit several engineering weeks, or more likely months, to it.

After April 22, no more security patches, bug fixes, or provider updates for the 2.x line. If a vulnerability surfaces in a dependency, you’ll be on your own.

Running unsupported software in a production data environment isn’t a risk most organizations will accept for long, especially those with compliance requirements. So the question becomes: should you upgrade or look elsewhere?

📘 Want a structured decision framework? Our free guide covers what changed in Airflow 3, where the architecture breaks, and how to migrate incrementally — whether you stay on Airflow or switch. Download the Airflow 2 EOL Guide →

Airflow 3 is not a drop-in upgrade. The official migration guide lists several breaking changes:

apache-airflow to apache-airflow-providers-standard. Your import statements need updating.These aren’t trivial changes. Each one is Airflow-specific work: knowledge you’re investing back into the Airflow ecosystem rather than into something transferable.

Orchestration decisions tend to calcify. Teams pick a tool, build around it, train people on it, and then live with it for years because the migration cost or perceived risk is always too high to justify.

EOL is an opportunity to reconsider that decision. Switching costs are lower right now than they will be after teams spend several weeks on Airflow-specific work and lock themselves in for another decade.

Many teams will upgrade without seriously evaluating alternatives because that could genuinely be the right call for some of them. But it’s worth asking the question before committing several engineering weeks to Airflow-specific work that won’t transfer anywhere else.

If you want a broader view of what’s available at this crossroads, we’ve covered enterprise Airflow alternatives in a separate post.

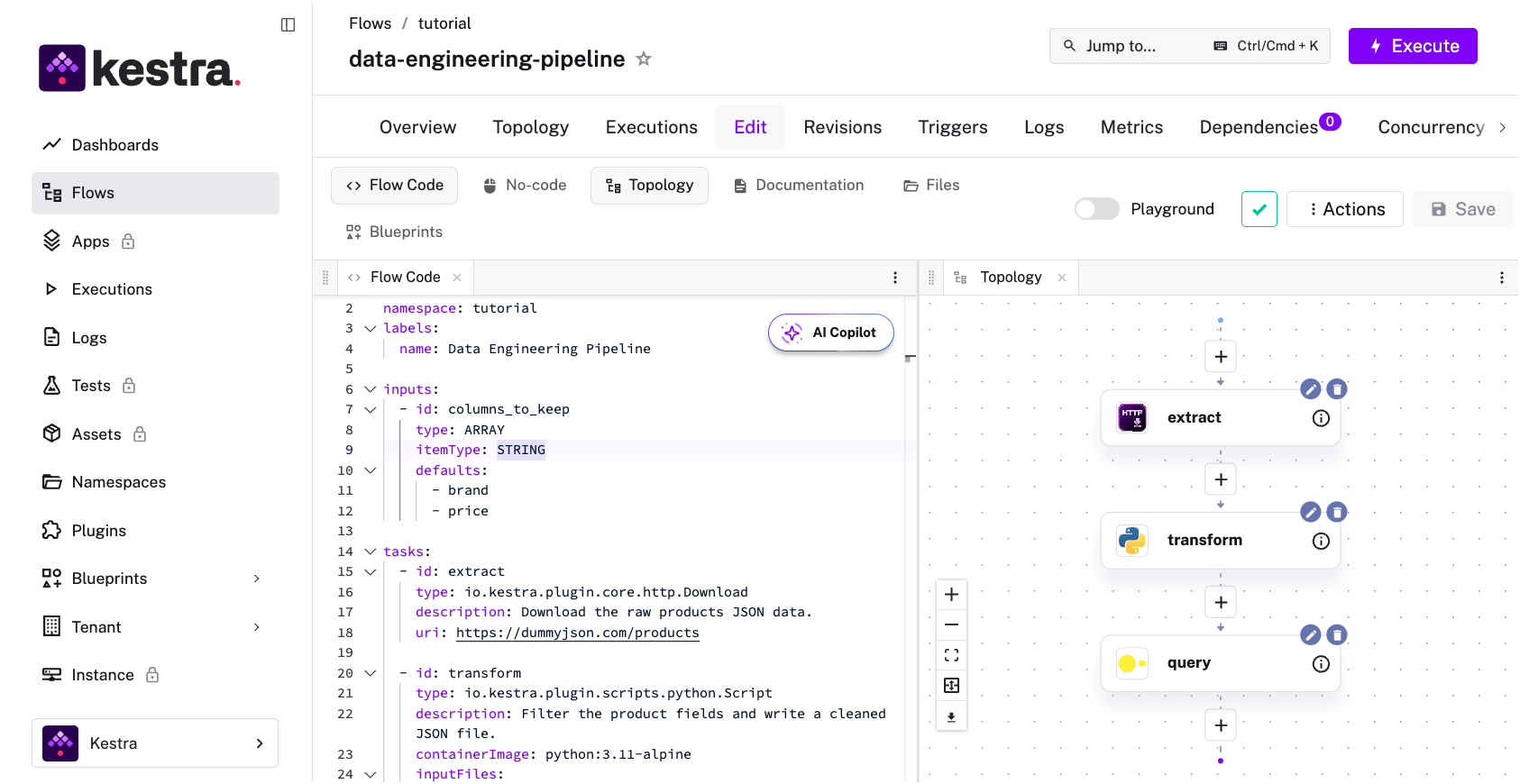

At this point teams generally go one of two directions: stay in the Airflow ecosystem with a managed offering like MWAA or Astronomer, or look at what declarative alternatives look like for data engineering. For Python-native teams, Dagster and Prefect often come up since they’re modern upgrades from Airflow, but both are still Python-first, which means the same constraint on who can own workflows carries over. Kestra is a different kind of bet: declarative workflows defined in YAML, with your existing Python scripts, SQL queries, and Shell commands running unchanged inside tasks, in isolated containers, without framework wrappers. The orchestration layer changes; your code doesn’t.

The migration lift is comparable to upgrading to Airflow 3. The outcome is different: instead of Airflow DAGs written in Python, you have YAML that any team member can read and modify, with tasks that run in any language. Same weeks of work; different decade ahead of you.

| Upgrade to Airflow 3 | Migrate to Kestra | |

|---|---|---|

| Migration effort | Refactor SubDAGs, update imports, restructure deployment (API server + DAG processor) | Convert DAGs to YAML flows, existing scripts run unchanged in isolated containers |

| Language support | Python only — orchestration and business logic are coupled | Any language — Python, SQL, Shell, R, Node.js, Go. Orchestration (YAML) is decoupled from execution |

| Who can own workflows | Python developers only | Anyone who can read YAML, plus developers writing tasks in any language |

| Deployment model | Multi-component: API server, DAG processor, scheduler, metadata DB, workers | Single Docker Compose for production-shaped setup, or Kubernetes-native |

| Event-driven workflows | Airflow 3 adds Asset Watchers, but core remains schedule-driven | Event-driven from the ground up: S3, webhooks, Kafka, API triggers as first-class YAML declarations |

| Vendor lock-in | Tied to Airflow ecosystem, Python operators, Airflow-specific knowledge | Open source (Apache 2.0), language-agnostic, no framework lock-in |

| Timeline | 4–8 weeks for mid-size deployments | 4–8 weeks for mid-size deployments — comparable effort, different outcome |

| What you get | Modernized Airflow with better UI and new features, but same architectural model | Declarative orchestration across data, infra, and business workflows in one platform |

If your team is Python-native and invested in the Airflow ecosystem, the upgrade is probably right. You have institutional knowledge, existing operators, and people trained on the model. The Airflow 3 migration guide is your next step. Start by inventorying your SubDAG usage. That’s typically where the most work is hiding.

If you’ve been frustrated by the Python-only constraint, or your workflows span data, infrastructure, and business processes that Airflow doesn’t serve well: Kestra is open source and takes about five minutes to spin up locally with Docker. The airflow-to-kestra-migration repository shows what a DAG-to-YAML conversion looks like in practice. Our guide on migrating Airflow DAGs to Kestra walks through the process in more detail. Convert one DAG you know well and you’ll have a realistic picture of what a migration involves.

For a detailed feature comparison, the Kestra vs. Airflow page covers the differences across architecture, deployment, and language support.

If your data engineering manager needs to see the architectural tradeoffs and a live DAG-to-YAML migration demo before committing to a direction, send them this link to our recent talk where we covered exactly this decision:

Apache Airflow 2 reaches end of life on April 22, 2026. After this date, no security patches, bug fixes, or provider updates will be released for the 2.x line.

Running unsupported software in production introduces security and compliance risks. Any CVEs discovered in Airflow 2 or its dependencies after EOL won’t receive patches. Organizations with compliance requirements — SOC 2, HIPAA, PCI-DSS — will likely need to migrate to a supported version or platform to maintain their certification.

The biggest breaking changes include: SubDAGs removed entirely (you must convert them to Task Groups or dynamic task mapping), deprecated context variables removed (execution_date, prev_ds, next_ds and others no longer work), provider packages moved from apache-airflow to apache-airflow-providers-standard, and the webserver split into two services (an API server and a DAG processor), which changes your deployment configuration.

It depends on your team and workloads. If you’re a Python-native data engineering team deeply invested in the Airflow ecosystem with existing operators and institutional knowledge, upgrading to Airflow 3 is probably the right call. If you’ve been constrained by Airflow’s Python-only model, if your workflows span data pipelines, infrastructure, and business processes, or if you want non-Python contributors to own workflows, the EOL window is a natural evaluation point for declarative alternatives like Kestra.

Both upgrading to Airflow 3 and migrating to an alternative typically take 4 to 8 weeks for a mid-size deployment (50-200 DAGs), depending on the number of custom operators, undocumented workarounds, and integration complexity. The airflow-to-kestra-migration repository provides a concrete reference for what a DAG-to-YAML conversion looks like.

EOL is a rare moment when the status quo actually costs you something. Use this opportunity to make the decision deliberately.

Stay up to date with the latest features and changes to Kestra