Kestra has streamlined our data processes, reduced costs, and significantly enhanced our scalability and efficiency. It has truly been a critical asset in our digital transformation journey.

Ship Data Pipelines in 5 Minutes.

Run Them at Enterprise Scale

One platform for data, AI, and business-critical workflows.

Data Orchestration Shouldn’t Be Hard

The challenge

- Airflow buries you in dependency conflicts, executor tuning, and infrastructure complexity instead of letting you build pipelines.

- Analysts use SQL. ML engineers use Python. Ops use Bash. But your orchestrator only speaks Python.

- Teams spend weeks learning DSL patterns before shipping a single pipeline.

How Kestra solves it

- Install with Docker and you’re running. No dependency management, no executor configuration. Just workflows.

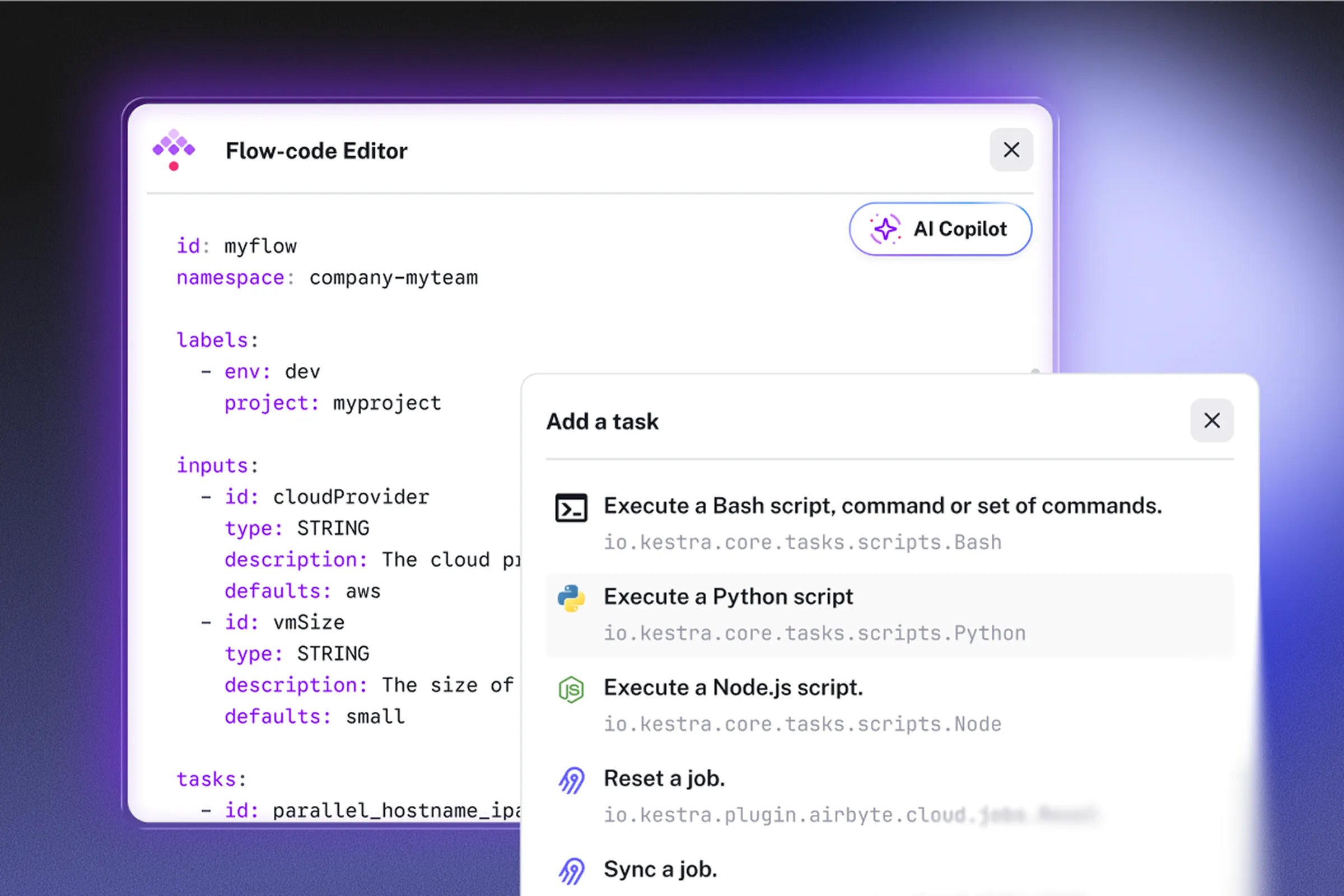

- Write tasks in any language. YAML orchestrates, your code stays native. No wrappers, no refactoring required.

- Know YAML? You’re ready. Pick a Blueprint and ship in under 5 minutes. Zero proprietary abstractions.
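Here is a minimal sketch of a flow that mixes Bash, Python, and SQL tasks, to illustrate the idea that YAML orchestrates while your code stays native. It assumes a recent Kestra release, where core tasks live under `io.kestra.plugin.core`, with the script and JDBC PostgreSQL plugins installed. The flow id, namespace, database URL, table name, and secret names are hypothetical placeholders. Check the task types and properties against the plugin documentation for the version you run.

```yaml
# Hypothetical flow: id, namespace, URL, table, and secret names are placeholders.
id: multi_language_pipeline
namespace: company.team

tasks:
  # Bash step: shell commands run as written
  - id: extract
    type: io.kestra.plugin.scripts.shell.Commands
    commands:
      - echo "Extracting raw data"

  # Python step: the script body is plain Python with no orchestrator imports
  - id: transform
    type: io.kestra.plugin.scripts.python.Script
    script: |
      rows = [{"order_id": 1, "amount": 42.0}]
      print("Transformed", len(rows), "rows")

  # SQL step: a plain query against PostgreSQL through the JDBC plugin
  - id: report
    type: io.kestra.plugin.jdbc.postgresql.Query
    url: jdbc:postgresql://localhost:5432/analytics
    username: "{{ secret('POSTGRES_USERNAME') }}"
    password: "{{ secret('POSTGRES_PASSWORD') }}"
    sql: SELECT COUNT(*) FROM orders

triggers:
  # Start the flow every day at 09:00
  - id: daily
    type: io.kestra.plugin.core.trigger.Schedule
    cron: "0 9 * * *"
```

Each step keeps its own language. The YAML only declares the order in which steps run, the credentials they use, and when the flow starts.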

Built For How Data Teams Actually Work

Any Language, Native Execution

Start From Blueprints, Ship in Minutes

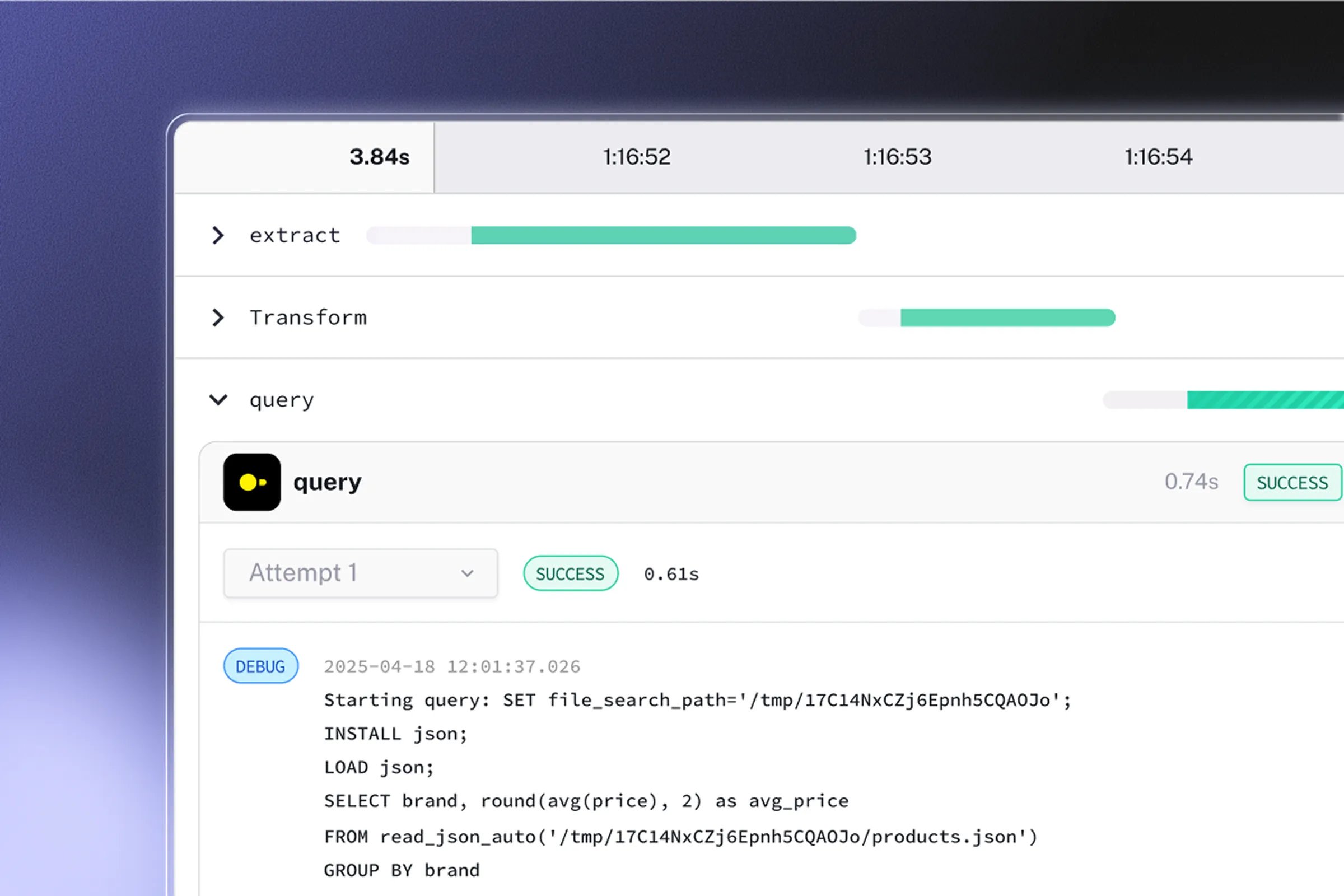

Enterprise-Grade Observability

Built For The Stack You Run in Production

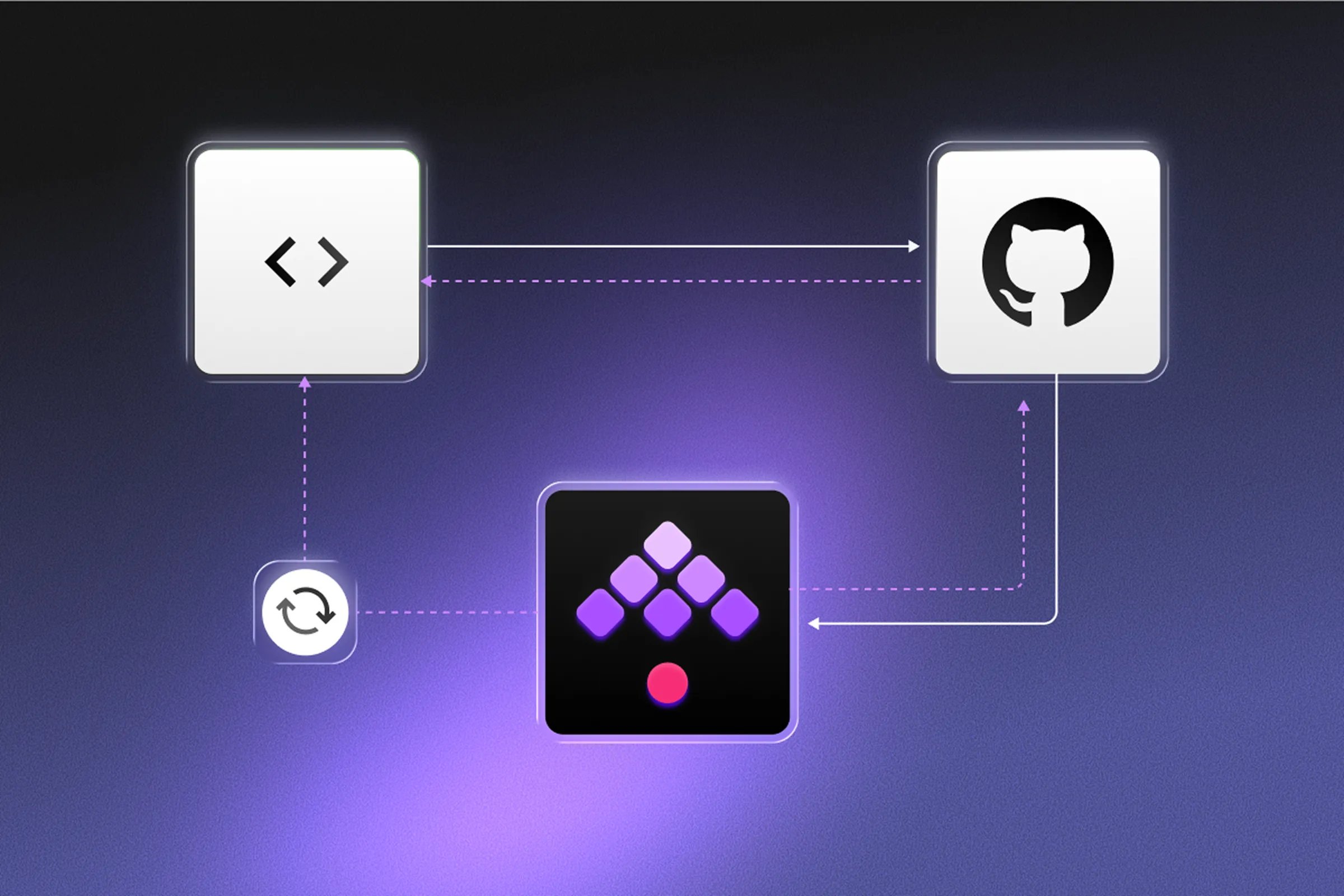

Version Control Native + CI/CD Ready

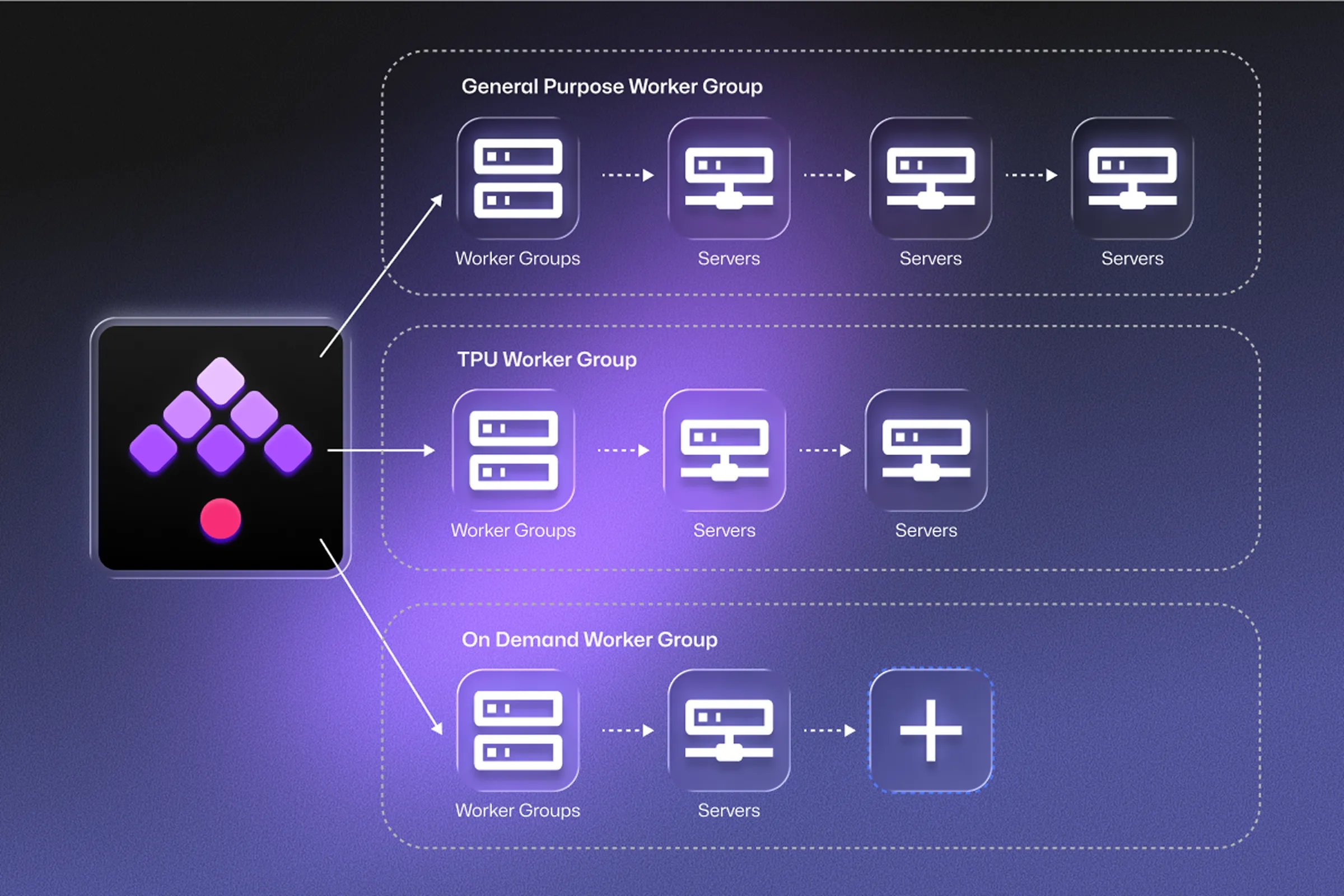

Scale From Prototype to Enterprise

Cut Orchestration Costs By 80%*

*Reported by teams switching from Airflow to Kestra in production.

Infrastructure costs

Self-hosted Kestra runs on minimal infrastructure. No overprovisioned workers or complex component scaling. Deploy on your existing Kubernetes cluster or a single VM.

Engineering time

Eliminate weeks of operational overhead. No upgrades breaking plugins, no dependency conflicts, no executor tuning. Ship pipelines, not infrastructure.

Licensing and managed services

Many orchestrators lock enterprise features behind expensive managed plans. Kestra delivers them through open source or flexible commercial licensing.

From Prompt to Production in 60 Seconds

Kestra Copilot turns natural language into working pipelines using dbt, Airbyte, Spark, Snowflake, BigQuery, Databricks, and 1400+ plugins.

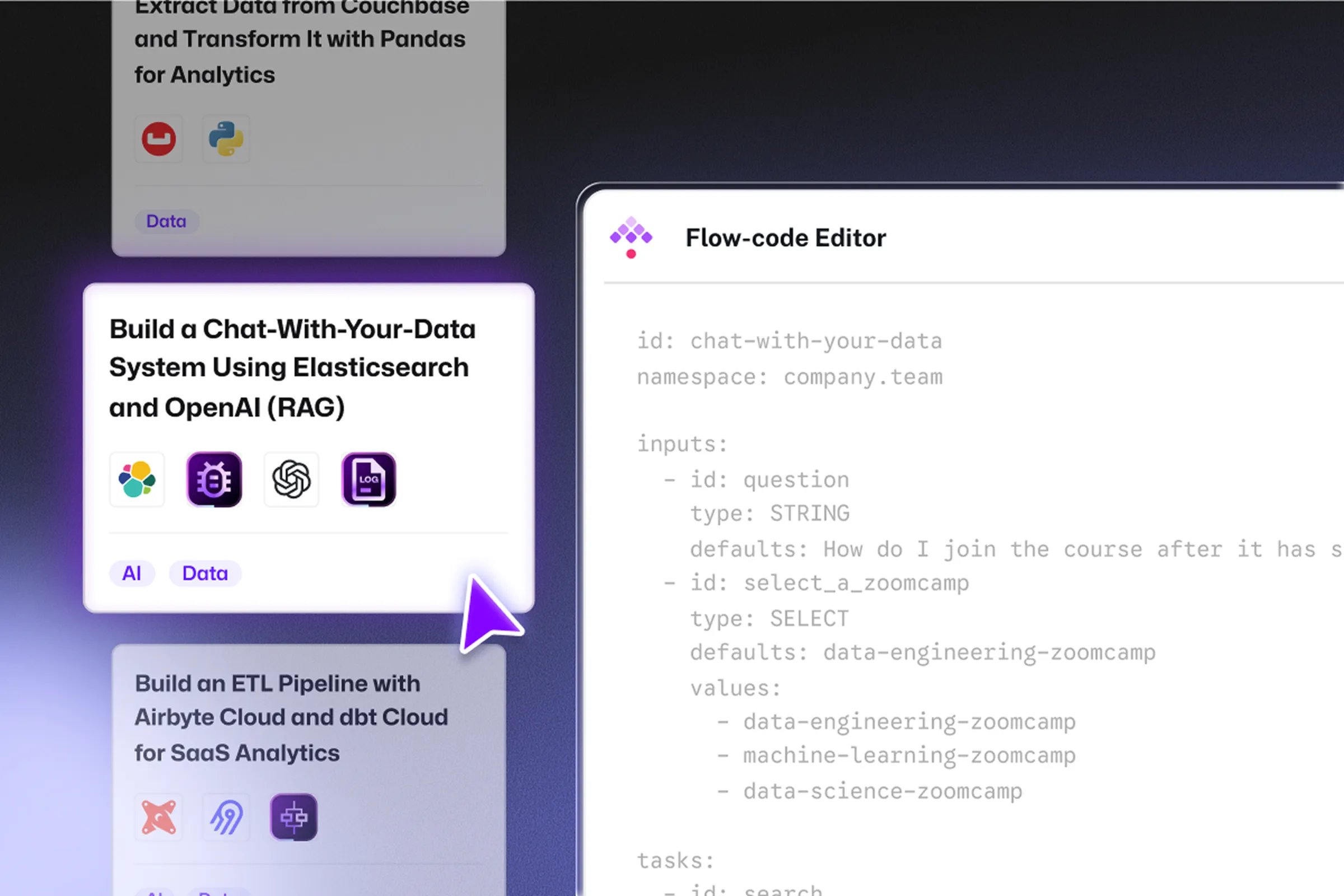

Start From Proven Data Patterns

Pick from our production-ready Blueprints for common data workflows. Copy, customize, deploy in minutes.

1400+ Plugins To Automate Your Work

Fivetran

Snowflake

Databricks

Azure

dbt

Airbyte

MongoDB

Cassandra

PostgreSQL

MySQL

Redshift

BigQuery

ClickHouse

Redis

Elasticsearch

Kafka

Pulsar

RabbitMQ

Kestra is Not Just Another Orchestrator

| | Kestra | Legacy orchestrators (e.g. Airflow) | Modern alternatives | Why this matters |
|---|---|---|---|---|
| Workflow definition | Declarative YAML | Python DAGs | Python SDKs with custom abstractions | Your existing scripts work as-is. Zero migration tax. |
| Languages supported | Any (Python, SQL, R, Bash, etc.) | Python only | Python only | Analysts use SQL, engineers use Python, ops use Bash. |
| Time to first pipeline | < 5 minutes | 1-2 hours | 30-60 minutes | Ship 10x faster. Deliver value on day one. |
| Learning curve | YAML (immediate) | Framework + infrastructure setup | Framework concepts and abstractions | Junior engineers ship pipelines on their first day. |
| Live DAG preview | Yes, real-time | No (deploy to see) | No (deploy to see) | See changes instantly, without deploy-to-preview cycles. |
| Event-driven triggers | Native, unlimited | Limited sensors (polling-based) | Yes, native support | React in seconds, not minutes, with real-time workflows. |
| Execution isolation | Containers per task | Shared workers | Configurable | No dependency conflicts. Ship fearlessly. |
| Built-in observability | Metrics, logs, tracing included | Requires manual setup | Setup required or cloud-dependent | Debug in minutes with full visibility out of the box. |
| Multi-language team support | SQL, Python, Bash all native | Python wrappers required | Python wrappers required | Everyone contributes using their native tools. |
| Scope beyond data | AI, infra, business workflows | Data pipelines only | Data pipelines primary focus | Future-proof your platform with one orchestrator for everything. |
| Enterprise deployment | Self-hosted or cloud | Self-managed infrastructure | Self-hosted or cloud-first | 50%+ lower TCO. Less infrastructure, less ops time. |
| Migration path | Incremental (run Airflow + Kestra in parallel) | N/A | Requires full rewrite | Move pipelines one by one, with no big-bang cutover. |
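A webhook is one way to illustrate the event-driven triggers row. Instead of a sensor that polls, the flow starts as soon as an HTTP request reaches its webhook URL. This is a minimal sketch that assumes the core `Webhook` trigger available in recent Kestra releases. The flow id, namespace, and key are hypothetical, and you should check the trigger's exact properties in the Kestra documentation for your version.

```yaml
# Hypothetical flow: id, namespace, and key are placeholders.
id: orders_webhook
namespace: company.team

tasks:
  # Log the incoming request body, which webhook-triggered flows expose as trigger.body
  - id: log_payload
    type: io.kestra.plugin.core.log.Log
    message: "Received event: {{ trigger.body }}"

triggers:
  # The key becomes part of the webhook URL, so treat it like a shared secret
  - id: on_order_event
    type: io.kestra.plugin.core.trigger.Webhook
    key: replace-with-a-secret-key
```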

Start With Data. Grow Without Limits.

Join 500+ data teams who’ve modernized their orchestration with Kestra.

Frequently asked questions

Find answers to your questions right here, and don't hesitate to Contact Us if you couldn't find what you're looking for.