Authors

Martin-Pierre Roset

Airflow 2 is end-of-life. Whether your team is upgrading to Airflow 3 or evaluating a broader migration, the decision can’t wait much longer. But committing to a direction doesn’t mean committing to a big-bang cutover.

With Kestra, you can transition one workflow at a time. Keep what works in Airflow, move jobs into Kestra incrementally, and avoid the risk of a full rewrite while critical workflows are in production. Kestra’s Airflow plugin lets you trigger and monitor Airflow DAGs directly from within Kestra, giving you a unified control plane across both systems from day one.

I’ll walk through how the plugin works, then show how this fits into a broader migration strategy for teams that can’t afford downtime.

This gradual migration follows a well-known strategy, the Strangler Fig pattern: the new system (Kestra) slowly replaces the old one (Airflow) by taking over its workflows piece by piece. Over time, more and more workflows run in Kestra while Airflow’s role diminishes, until Kestra handles everything.

This approach avoids the risks and complexity of doing a full migration in one go. Instead of uprooting everything at once, you can orchestrate Airflow DAGs within Kestra’s control plane and centralized UI, gaining better visibility and scalability, while continuing to leverage what’s already working in Airflow.
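As a rough illustration of a workflow in mid-migration, the sketch below chains one step that still lives in Airflow with one step that already runs natively in Kestra. The flow ID, the DAG ID `legacy_extract_dag`, and the log message are hypothetical; the Airflow task reuses only the properties shown in this post, and the `Log` task type assumes a recent Kestra release.

```yaml
id: orders_pipeline            # hypothetical flow ID
namespace: company.team

tasks:
  # Step still owned by Airflow: trigger the legacy DAG and wait for it to finish.
  - id: legacy_extract
    type: io.kestra.plugin.airflow.dags.TriggerDagRun
    baseUrl: http://host.docker.internal:8080
    dagId: legacy_extract_dag  # hypothetical DAG ID
    wait: true
    pollFrequency: PT5S
    options:
      basicAuthUser: "{{ secret('AIRFLOW_USERNAME') }}"
      basicAuthPassword: "{{ secret('AIRFLOW_PASSWORD') }}"
    body:
      conf:
        source: kestra

  # Step already migrated: a native Kestra task that runs once the DAG run completes.
  - id: report
    type: io.kestra.plugin.core.log.Log
    message: "Legacy extract finished for execution {{ execution.id }}"
```

Because tasks in a flow run sequentially by default and `wait: true` blocks until the DAG run ends, the native step only starts after the Airflow step has completed. Migrating the extract later would mean swapping the first task for a native one without touching the rest of the flow.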

📘 Airflow 2 is no longer maintained. If you’re evaluating whether to upgrade to Airflow 3 or migrate to Kestra, our free Airflow 2 to Kestra migration guide breaks down both paths.

In response to many requests from users seeking support for easier migrations from Airflow, we’ve developed a plugin that lets you trigger and orchestrate Airflow DAGs directly from within Kestra. This makes it possible to run Airflow jobs as part of your Kestra workflows, giving you the flexibility to incorporate your existing DAGs into Kestra’s broader orchestration capabilities.

Here’s an example of how you can use Kestra to trigger an Airflow DAG:

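    id: airflow
    namespace: company.team

    tasks:
      - id: run_dag
        type: io.kestra.plugin.airflow.dags.TriggerDagRun
        baseUrl: http://host.docker.internal:8080
        dagId: hello_world_dag
        wait: true
        pollFrequency: PT1S
        options:
          basicAuthUser: "{{ secret('AIRFLOW_USERNAME') }}"
          basicAuthPassword: "{{ secret('AIRFLOW_PASSWORD') }}"
        body:
          conf:
            source: kestra
            namespace: "{{ flow.namespace }}"
            flow: "{{ flow.id }}"
            task: "{{ task.id }}"
            execution: "{{ execution.id }}"

In this setup, the `TriggerDagRun` task calls the Airflow instance at `baseUrl` and starts `hello_world_dag`. With `wait: true`, Kestra waits for the DAG run to finish, checking its status every second (`pollFrequency: PT1S`). The Airflow credentials are read from Kestra secrets, and the `body.conf` block passes the flow’s namespace, flow ID, task ID, and execution ID to the DAG run, so each run can be traced back to the Kestra execution that started it.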

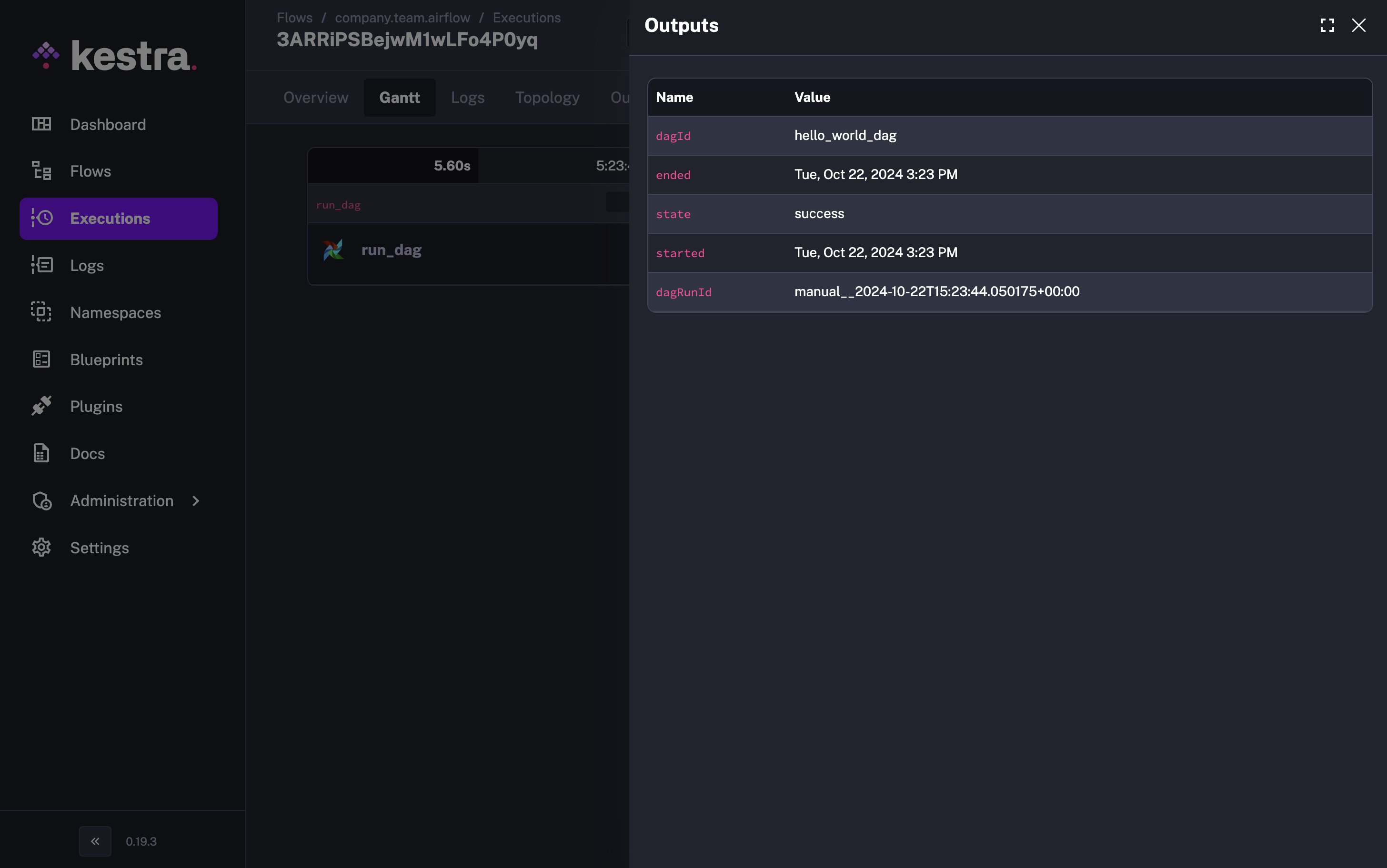

Kestra captures all the DAG run information.

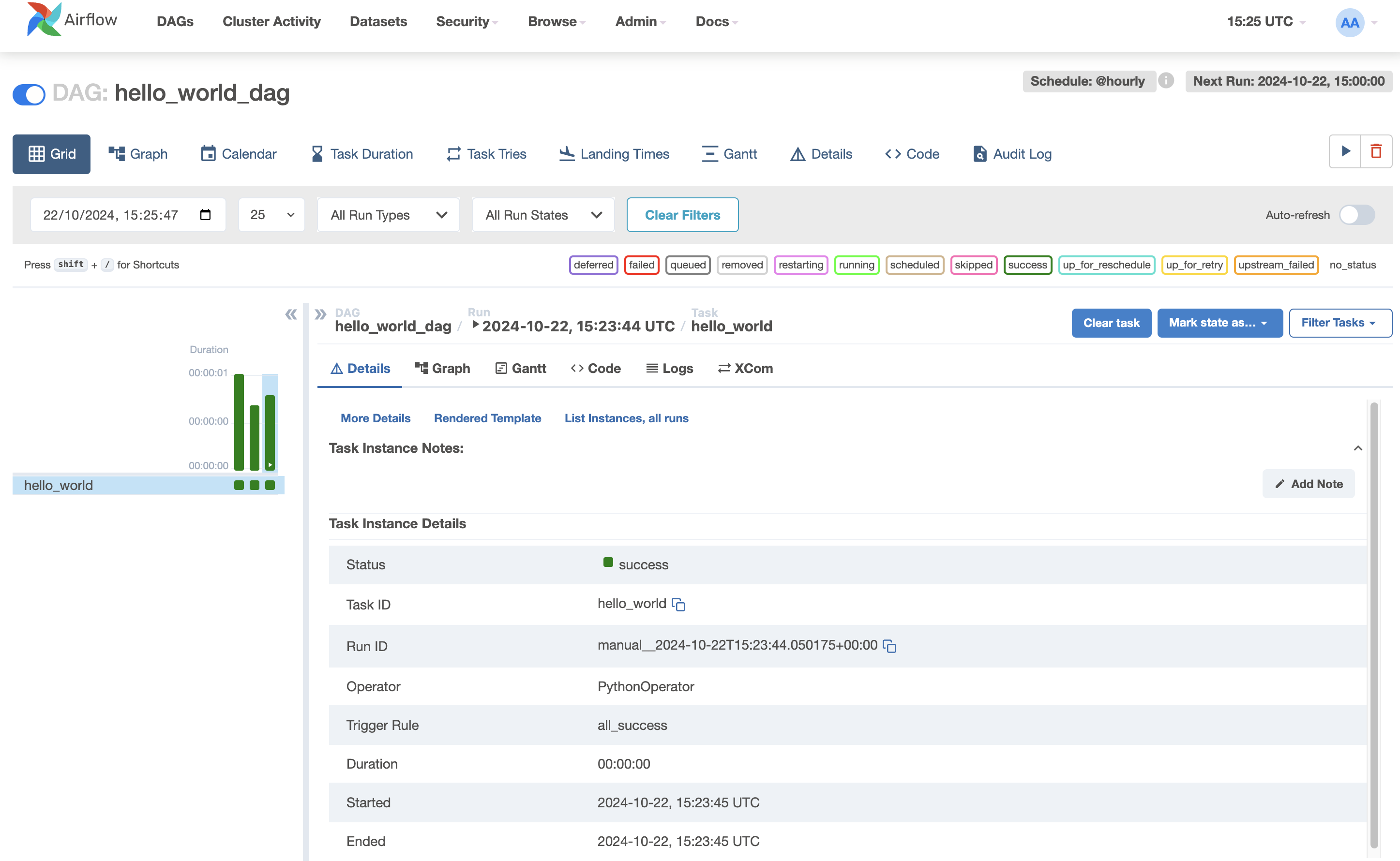

On the other side, the Airflow DAG is triggered successfully.

Once integrated, Kestra becomes the central control plane for orchestrating workflows across your stack. Whether you’re managing complex real-time data pipelines or orchestrating legacy Airflow jobs, you can monitor every execution from Kestra’s dashboard rather than relying only on Airflow’s built-in tools. Centralized logging, real-time outputs, and cross-system execution history mean you’re not context-switching between dashboards to understand what’s running.

Kestra’s declarative approach removes the glue code that makes Airflow DAGs complex. In Airflow, even basic workflows can end up requiring complicated Python scripts. With Kestra, instead of managing Python dependencies and intricate DAG structures, you define workflows in a simple, readable declarative syntax and manage them directly through the UI.
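To make the declarative style concrete, here is a minimal sketch of a small scheduled job written as a native Kestra flow, with no Python file or DAG object involved. The flow ID, input, message, and cron expression are hypothetical, and the `Log` and `Schedule` types and the input syntax assume a recent Kestra release.

```yaml
id: daily_greeting             # hypothetical flow ID
namespace: company.team

inputs:
  # A typed input with a default value, so scheduled runs need no extra parameters.
  - id: name
    type: STRING
    defaults: team

tasks:
  # A single native task; the message is rendered from the input at runtime.
  - id: greet
    type: io.kestra.plugin.core.log.Log
    message: "Hello, {{ inputs.name }}"

triggers:
  # Run every day at 08:00 using a standard cron expression.
  - id: every_morning
    type: io.kestra.plugin.core.trigger.Schedule
    cron: "0 8 * * *"
```

The whole workflow, including its schedule and parameters, lives in one YAML document that can be edited in the UI or kept in version control.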

Here’s how Kestra helps you:

The Strangler Fig approach handles the tactical question: how do you move workflows without breaking production? There’s a harder one underneath it. Teams upgrading to Airflow 3 are not just modernizing their tooling. They’re reaffirming a commitment to a Python-first, scheduler-centric architecture where execution is coupled to orchestration. That may still be the right call for your team, but the Airflow 2 EOL is worth treating as a moment for deliberate evaluation rather than routine maintenance.

Our Airflow migration whitepaper covers the decision in full: the real cost of the Airflow 3 upgrade, what a declarative alternative looks like at scale, and how teams like Crédit Agricole migrated across 100+ clusters without a big-bang cutover. Free to download.

If you’d rather talk through your specific setup, book a demo.

If you have any questions, reach out via Slack or open a GitHub issue. If you like the project, give us a GitHub star and join the community.
